Avoiding Common Pitfalls: A Learner's Guide to Mastering Docker

Aspiring DevOps Engineer with hands-on experience in cloud platforms, automation, CI/CD pipelines, containerization, and infrastructure as code. Skilled in AWS, Docker, Kubernetes, Terraform, Ansible, and modern monitoring tools. Experienced with Linux administration, cPanel hosting environments, and deployment workflows. Additionally trained in full-stack development using React and FastAPI.

Learning Docker was one of the most transformative experiences in my DevOps career. Like many developers, I started with skepticism and confusion, but ended up falling in love with containers. Here's my honest account of the challenges I faced and how I overcame them.

The Beginning: Why Docker?

Before Docker, my development workflow was plagued with issues:

Inconsistent environments across development, staging, and production

The infamous "It works on my machine" syndrome

Complex dependency management that broke with every system update

Slow onboarding for new team members who spent days setting up their environment

I knew I needed a better solution, and Docker kept coming up in every DevOps conversation.

Challenge 1: Understanding Containers vs Virtual Machines

The Confusion

My first hurdle was understanding why containers were different from virtual machines. I kept thinking of them as "lightweight VMs", which led to many misconceptions:

Why don't containers have their own kernel?

How can they be so small if they contain an entire OS?

What happens to my data when a container stops?

The Breakthrough

The breakthrough came when I stopped thinking of containers as mini-VMs and started seeing them as isolated processes. The key insights were:

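Containers share the host kernel; they don't virtualize hardware

Container images contain the application and its userland dependencies, but no kernel, so they are not a full OS

Containers are ephemeral by design: anything written inside a container is lost when the container is removed, so persistent data belongs in volumes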

# This simple command helped me understand container isolation
docker run -it alpine sh
# Inside the container, `ps aux` lists only the container's own processes
# (the shell and ps itself), not an entire OS worth of services

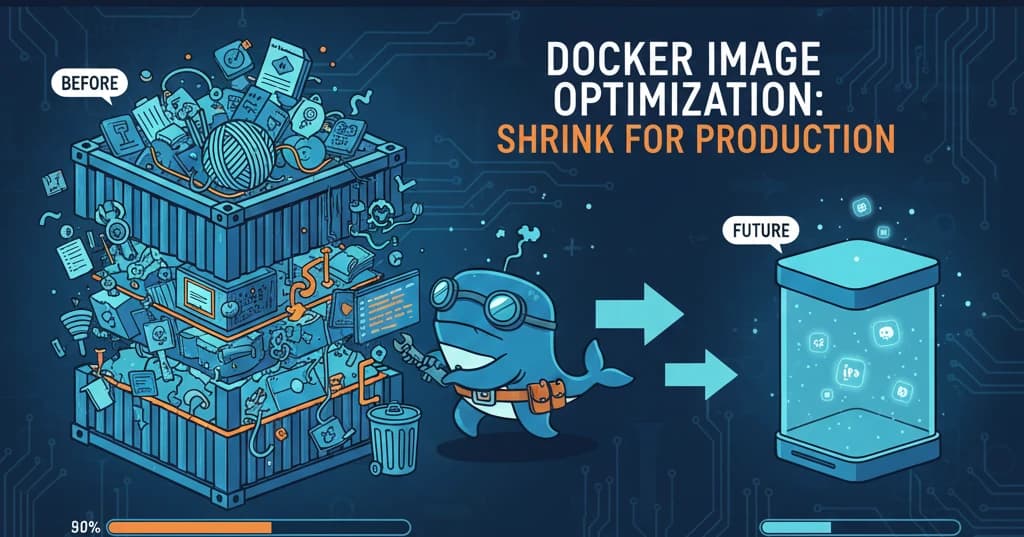
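To check the "shared kernel" point for yourself, a minimal sketch is to compare the kernel release reported by the host with the one reported inside a container. This assumes a Linux host; on Docker Desktop for macOS or Windows, containers run inside a Linux VM, so the two values will differ. The `host_kernel` variable name is just for illustration.

```shell
# On a Linux host, both lines should print the same kernel release,
# because the container is a process running on the host's kernel.
host_kernel="$(uname -r)"
echo "host kernel:      ${host_kernel}"

# Guarded so the snippet is harmless on a machine without Docker installed.
if command -v docker >/dev/null 2>&1; then
  echo "container kernel: $(docker run --rm alpine uname -r)"
fi
```

A virtual machine would report the kernel of its own guest OS instead.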

Challenge 2: Writing Efficient Dockerfiles

The Problem

My first Dockerfiles were disasters - 2GB images that took 20 minutes to build. Every small code change triggered a complete rebuild.

The Solution: Layer Caching and Multi-stage Builds

Learning about Docker's layer caching system was game-changing:

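# ❌ BAD: Every code change rebuilds everything
FROM node:18
WORKDIR /app
COPY . .
RUN npm install
CMD ["node", "index.js"]

# ✅ GOOD: Dependencies cached separately from code
# Stage 1: install production dependencies only
FROM node:18-alpine AS builder
WORKDIR /app
# Copy only the manifests so this layer stays cached until dependencies change
COPY package*.json ./
# --omit=dev replaces the deprecated --only=production flag
RUN npm ci --omit=dev

# Stage 2: slim runtime image
FROM node:18-alpine
WORKDIR /app
COPY --from=builder /app/node_modules ./node_modules
COPY . .
# Run as the unprivileged user that the official node image provides
USER node
CMD ["node", "index.js"]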
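One way to see layer caching at work is to build the same image twice and then look at its layers. The sketch below assumes a Dockerfile in the current directory; the image name `myapp:1.0.0` and the function name `build_and_inspect` are hypothetical. It is wrapped in a function so nothing runs until you call it.

```shell
# Hypothetical image name and tag: myapp:1.0.0
build_and_inspect() {
  # First build: every instruction is executed.
  docker build -t myapp:1.0.0 .
  # Second build with no file changes: the steps should be reported as cached.
  docker build -t myapp:1.0.0 .
  # Show the final image size.
  docker image ls myapp
  # Show each layer and the instruction that created it.
  docker history myapp:1.0.0
}
```

Run `build_and_inspect` from the project root, then change one source file and run it again: only the steps from the final `COPY . .` onward should be rebuilt.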

Key lessons learned:

Order instructions from least to most frequently changing

Use .dockerignore to exclude unnecessary files

Multi-stage builds dramatically reduce image size

Always use specific version tags, never latest
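As a starting point for the .dockerignore lesson, here is a small sketch that writes one next to the Dockerfile. The entries are my assumptions for a typical Node.js project, not a definitive list. Excluding node_modules matters in particular when a Dockerfile runs `COPY . .` after installing dependencies, because otherwise the host's node_modules directory would be copied over the freshly installed one.

```shell
# Write a starter .dockerignore in the current directory.
# The entries are assumptions for a typical Node.js project; adjust as needed.
cat > .dockerignore <<'EOF'
node_modules
npm-debug.log
.git
.env
EOF

# Show what will be excluded from the build context.
cat .dockerignore
```

A smaller build context also means less data sent to the Docker daemon on every build.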

Challenge 3: Docker Networking

The Confusion

Docker networking was initially baffling. Why couldn't my containers talk to each other? Why did localhost not work the way I expected?

Understanding Network Types

| Network Type | Use Case | My Experience |
| --- | --- | --- |
| Bridge | Default for standalone containers | Works great for local development |
| Host | Performance-critical applications | Used for monitoring tools |
| Overlay | Multi-host communication | Essential for Docker Swarm |
| None | Security isolation | Rarely used |

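Inside a container, localhost refers to that container itself, not to the host or a neighbouring container. The key insight: containers on the default bridge network can only reach each other by IP address, but on a user-defined network (Compose creates one per project automatically), container and service names become DNS names: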

# docker-compose.yml
services:
  web:
    build: .
    depends_on:
      - db
    environment:
      - DATABASE_URL=postgres://db:5432/myapp # 'db' resolves to the database container
  db:
    image: postgres:15-alpine

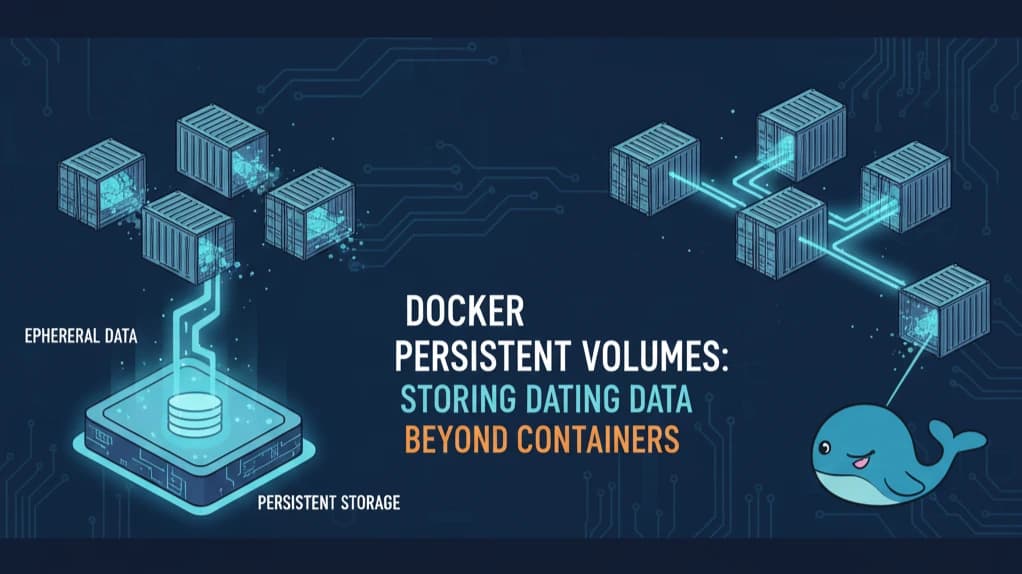
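The same name resolution can be tried without Compose. This is a rough sketch using the plain Docker CLI; the network name `demo-net`, the container name `demo-db`, and the function name are all hypothetical. It is wrapped in a function so nothing runs until you call it.

```shell
# Hypothetical names for this demo: demo-net (network) and demo-db (container).
dns_by_container_name() {
  # Create a user-defined bridge network.
  docker network create demo-net
  # Start a database container attached to that network.
  docker run -d --name demo-db --network demo-net \
    -e POSTGRES_PASSWORD=secret postgres:15-alpine
  # From a second container on the same network, the name "demo-db"
  # resolves through Docker's embedded DNS server.
  docker run --rm --network demo-net alpine ping -c 1 demo-db
  # Clean up the demo resources.
  docker rm -f demo-db
  docker network rm demo-net
}
```

If you drop `--network demo-net` from the second `docker run`, the ping should fail to resolve the name, which is the behaviour that confused me at first.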

Challenge 4: Data Persistence with Volumes

The Problem

I lost important data multiple times before understanding Docker volumes. Containers are ephemeral, but my database data shouldn't be!

The Solution

# Named volumes - Docker manages the location

docker volume create mydata

docker run -v mydata:/app/data myimage

# Bind mounts - You control the location

docker run -v /host/path:/container/path myimage

When to use what:

Named volumes: Production data, databases, shared data between containers

Bind mounts: Development (hot reload), configuration files
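To convince yourself that a named volume outlives the containers that use it, a minimal sketch is to write a file from one container and read it back from another. The volume name `demo-data` and the function name are hypothetical. It is wrapped in a function so nothing runs until you call it.

```shell
# Hypothetical volume name: demo-data
volume_survives_container() {
  docker volume create demo-data
  # The first container writes a file into the volume and is then removed (--rm).
  docker run --rm -v demo-data:/data alpine sh -c 'echo "hello" > /data/note.txt'
  # A brand-new container mounting the same volume can still read the file.
  docker run --rm -v demo-data:/data alpine cat /data/note.txt
  # Remove the volume once the demo is done.
  docker volume rm demo-data
}
```

Without the `-v demo-data:/data` flag, the file would live in the first container's writable layer and disappear with it.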

Challenge 5: Docker Compose Complexity

Growing Pains

As my applications grew, managing multiple containers with docker run became unwieldy. Docker Compose seemed like magic at first, but the YAML syntax had its quirks.

Mastering Compose

# The top-level "version" key is obsolete in current Docker Compose and can be omitted
services:
  app:
    build:
      context: .
      dockerfile: Dockerfile
    ports:
      - "3000:3000"
    environment:
      - NODE_ENV=development
    volumes:
      - .:/app
      - /app/node_modules # Anonymous volume to preserve node_modules
    depends_on:
      db:
        condition: service_healthy # Wait until the db healthcheck passes
  db:
    image: postgres:15-alpine
    environment:
      - POSTGRES_PASSWORD=secret
    volumes:
      - postgres_data:/var/lib/postgresql/data
    healthcheck:
      test: ["CMD-SHELL", "pg_isready -U postgres"]
      interval: 10s
      timeout: 5s
      retries: 5

volumes:
  postgres_data:
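A short sequence of commands covers most of my day-to-day Compose usage. This sketch assumes the Compose v2 plugin (`docker compose`, not the older `docker-compose` binary) and a Compose file in the current directory; the function name is hypothetical. It is wrapped in a function so nothing runs until you call it.

```shell
compose_smoke_test() {
  # Validate the Compose file without printing the resolved configuration.
  docker compose config --quiet
  # Build if needed and start all services in the background.
  docker compose up -d
  # List the services; the status column includes the healthcheck state.
  docker compose ps
  # Stop and remove the containers and the project network.
  # Named volumes are kept unless you add -v.
  docker compose down
}
```

Running the validation step first catches indentation mistakes before any container starts, which is where most of my YAML quirks turned out to be.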

Key Lessons Learned

Looking back at my Docker journey, here are the most important lessons:

1. Start Simple, Add Complexity Gradually

Don't try to learn Docker Swarm, Kubernetes, and multi-stage builds on day one. Master the basics first:

docker run, docker build, docker ps

Simple Dockerfiles

Docker Compose for multi-container apps

2. Read the Official Documentation

The Docker docs are excellent. I wasted weeks on blog posts with outdated information before discovering the official tutorials.

3. Practice with Real Projects

Tutorial hell is real. I learned the most by containerizing my own projects and fixing the issues that came up.

4. Join the Community

The Docker community on Discord, Reddit, and Stack Overflow helped me solve countless issues. Don't be afraid to ask "stupid" questions.

5. Embrace the Philosophy

Containers are meant to be:

Immutable: Don't modify running containers; rebuild the image and replace them

Ephemeral: They can be removed and recreated without data loss (if you use volumes)

Declarative: Infrastructure as code, not manual setup

Tools That Helped Me

Docker Desktop: Essential for local development

Dive: Analyze image layers and find bloat

Lazydocker: Terminal UI for managing containers

Portainer: Web UI for Docker management

Conclusion

Docker transformed how I think about application deployment. The initial learning curve was steep, but the benefits are immense:

Consistent environments everywhere

Faster onboarding for new team members

Easier scaling and deployment

Better isolation and security

If you're starting your Docker journey, embrace the confusion - it's part of the process. Every error message is a learning opportunity, and the container ecosystem keeps getting better.

The best time to learn Docker was five years ago. The second best time is now.

What challenges did you face learning Docker? I'd love to hear your stories!